Alan Kay says that Xerox PARC bought its way into the future by paying lots of money for each computer. Today, you can (almost) buy your way into the future of mobile computers by paying small amounts of money for lots of computers. This is the story of the other things that need to happen to get into the future.

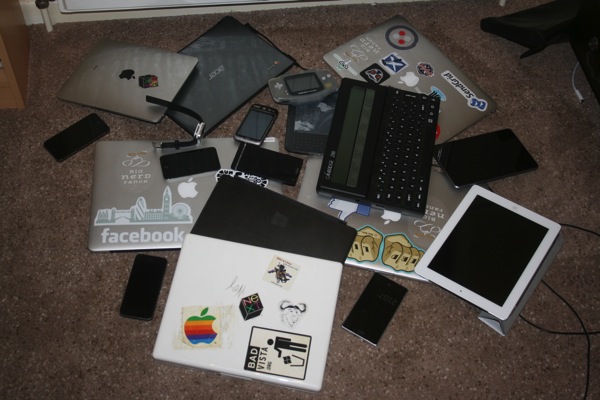

I own rather a few portable computers.

Such a photo certainly puts me deep in the long tail of interwebbedness. However, let me say this: within a couple of decades, this photo will show a perfectly reasonable number of portable computers for one office room. Faster networks will enable more computers in a single location, and that will be an interesting source of future applications.

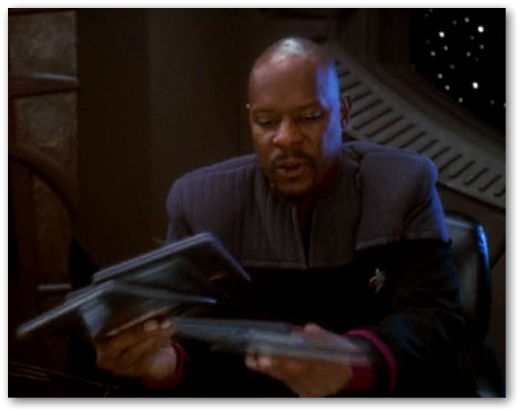

Here’s the distant future. The people in this picture are doing something that today’s people do not do so readily.

One of them is giving his PADD to the other. Is that because PADDs are super-expensive, so everybody has to share them? No: it’s because they’re so cheap, it’s easy to give one to someone who needs the information on it and to print/replicate/fax/magic a new one.

We can’t do that today. Partly it’s because the devices are pretty expensive. But their value isn’t just associated with their monetary worth: they’ve got so many personalisations, account settings and stored credentials on them that the idea of giving an unlocked device to someone else is unconscionable to many people. The trick is not merely to make them cheap, but to make them disposable.

Disposable pad computers also solve another problem: how to display multiple views simultaneously on a single pad screen.

You can go from rearranging metaphorical documents on a metaphorical desktop back to actually arranging documents on a desktop. Rather than fighting with admittedly ingenious split-screen UI, you can just put multiple screens side by side.

The cheapest tablet computer I’m aware of that’s for sale near me is around £30, but that’s still too expensive. When they’re effectively free, and when it’s as easy to give them away as it is to use them, then we’ll really be living in the future.

Just as it began, this post ends with Xerox PARC, and with the inspiration for it: